NLP / Sentiment Analysis · Completed

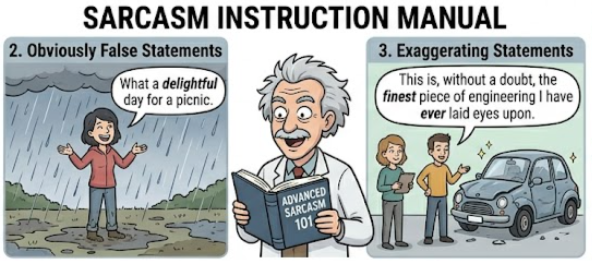

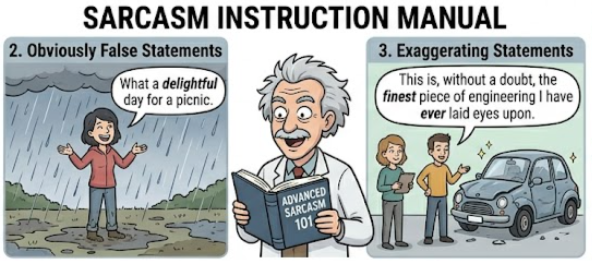

Sarcasm Detection on Reddit

2024

Comparative NLP study on 1.3M Reddit comments using Naive Bayes, TF-IDF + Logistic Regression, and DistilBERT fine-tuning. The fine-tuned DistilBERT model reached the highest accuracy, 76.65%.

AI/ML

Tech Stack

DistilBERT, TF-IDF, Logistic Regression, Naive Bayes, NLP, Python

Key Highlights

- Conducted a comparative study of sarcasm detection on 1.3 million Reddit comments, implementing three models: Naive Bayes (66.55% accuracy), TF-IDF + Logistic Regression (70.80%), and a fine-tuned DistilBERT transformer (76.65%).

- Performed extensive preprocessing including tokenization, stopword removal, and lemmatization; engineered n-gram features (unigrams and bigrams) for the statistical baseline models.

- Fine-tuned DistilBERT using Hugging Face Transformers with the AdamW optimizer, a linear warmup scheduler, and gradient clipping, showing that a model with bidirectional context outperforms the statistical baselines (76.65% vs. 70.80% accuracy for the best baseline).

- Both the TF-IDF + Logistic Regression and DistilBERT models classified every comment correctly in live Reddit comment prediction tests; provided evidence-based recommendations balancing performance, interpretability, and compute cost.

- Visualized token-level attention weights from DistilBERT to interpret which contextual cues (punctuation, intensifiers, semantic incongruence) contributed most to sarcasm classification.

- Structured the project as a reproducible research pipeline with modular preprocessing, training, and evaluation scripts, detailed in a final comparative analysis report.
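
To illustrate the n-gram features mentioned in the highlights above, here is a minimal sketch of unigram and bigram counting in plain Python. It is not the project's code: the function name `ngram_counts`, the whitespace tokenizer, and the sample comment are assumptions made for illustration, and it omits the stopword removal and lemmatization steps.

```python
from collections import Counter


def ngram_counts(text, n_values=(1, 2)):
    """Count word n-grams for each n in n_values (default: unigrams and bigrams)."""
    # Assumption: lowercase + whitespace split stands in for a real tokenizer.
    tokens = text.lower().split()
    counts = Counter()
    for n in n_values:
        # Slide a window of n tokens across the comment.
        for i in range(len(tokens) - n + 1):
            counts[" ".join(tokens[i:i + n])] += 1
    return counts


# Hypothetical sample comment, not taken from the dataset.
features = ngram_counts("oh great another monday")
```

Bigrams such as `"oh great"` keep a little word order that unigram counts discard, which is one reason they are a common choice for statistical sarcasm baselines.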
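
The linear warmup scheduler and gradient clipping named in the fine-tuning highlight above can be sketched framework-free as follows. This is an illustrative sketch, not the project's training code: the function names, the learning rate, the step counts, and the clipping threshold are all assumed values, and a real run would typically rely on the equivalent utilities in the training framework.

```python
import math


def linear_warmup_lr(step, base_lr, warmup_steps, total_steps):
    """Learning rate that rises linearly to base_lr, then decays linearly to 0."""
    if step < warmup_steps:
        # Warmup phase: scale from 0 up to base_lr.
        return base_lr * step / max(1, warmup_steps)
    # Decay phase: scale from base_lr down to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


def clip_by_global_norm(grads, max_norm):
    """Rescale a flat list of gradients so their L2 norm is at most max_norm."""
    total_norm = math.sqrt(sum(g * g for g in grads))
    if total_norm <= max_norm:
        return list(grads)
    scale = max_norm / total_norm
    return [g * scale for g in grads]


# Assumed hyperparameters, chosen only for illustration.
BASE_LR, WARMUP, TOTAL = 2e-5, 100, 1000
lr_mid_warmup = linear_warmup_lr(50, BASE_LR, WARMUP, TOTAL)
clipped = clip_by_global_norm([3.0, 4.0], max_norm=1.0)
```

Warmup keeps early updates small while the optimizer statistics settle, and clipping bounds the size of any single update; both are common safeguards when fine-tuning a pretrained transformer.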